Language Team, Research

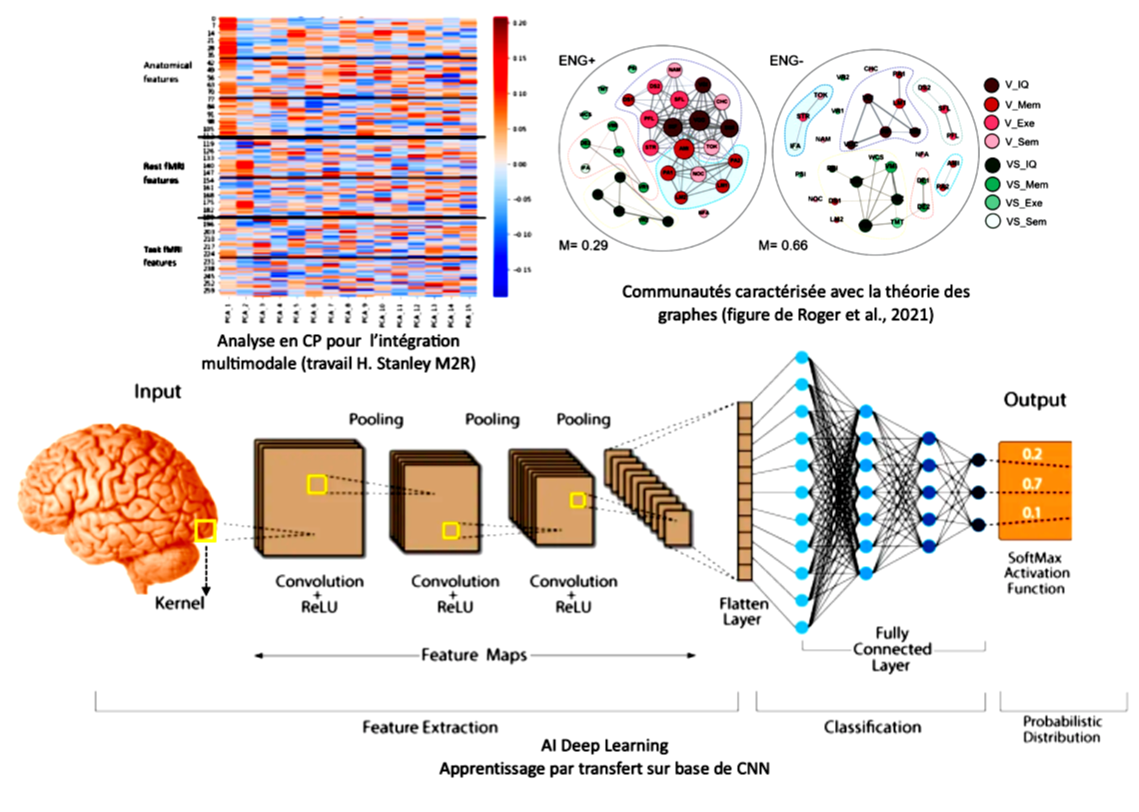

Inter-cognitive and transcognitive modeling of multimodal biomarkers, with artificial intelligence methods. Application to the unified language-union-memory framework (L∪M)

Monica BACIU

Martial MERMILLOD

Sophie ACHARD

This research lies at the interface between language and declarative memory. It addresses their interactive union from a connectomic, multimodal and integrative perspective, using artificial intelligence methods. Integrating multimodal data to elucidate the processes and neural networks underlying cognition and behavior is a major challenge in cognitive neuroscience. The challenge is tied to a current paradigm shift: recent neurocognitive models hold that human behaviors are made possible by complex interactions between cognitive functions. These models assume that complex cognitive systems are shaped by interactions between processes, and that functional integration and specialization are supported by a modular architecture. Because these connectomic models reveal properties that traditional models cannot detect, it is essential to combine multiple sources of data: behavioral, cognitive and cerebral. Combining multimodal biomarkers that reflect the multiple facets of cognition and the brain will allow new properties of the system to emerge, properties that are intrinsically multimodal and invisible from a monomodal perspective. This is precisely the objective of the project: to consider language and declarative memory within this new interactive framework.
